Temperature & Entropy

No edit summary |

Tag: Undo |

||

| Line 1: | Line 1: | ||

Claimed by Josh Brandt (April 19th, Spring 2022) | |||

<blockquote> | <blockquote> | ||

| Line 6: | Line 6: | ||

<p>— Stephen Hawking</p> | <p>— Stephen Hawking</p> | ||

Entropy is a fundamental characteristic of a system: highly related to the topic of Energy. It is often described as a quantitative measure of disorder. Its formal definition makes it a measure of how many ways there is to distribute energy into a system. What makes Entropy so important is its connection to the Second Law of Thermodynamics, which states | |||

What makes | |||

<blockquote> | <blockquote> | ||

<p>The | <p>The entropy of a system never decreases spontaneously.</p> | ||

</blockquote> | </blockquote> | ||

Entropy is highly related to [[Application of Statistics in Physics]], since it is | Entropy is highly related to [[Application of Statistics in Physics]], since it is a result of probability. The Second Law of Thermodynamics rests upon the fact that it is always more likely for entropy to increase, since it is more likely for an outcome to occur if there are more ways which it can. On macroscopic scales, the chances of entropy decrease as non-zero, but are so small as to never be observed. | ||

The concept of Entropy is famous for its proof against the existence of Perpetual Motion Machines; disqualifying their ability to maintain order. The concept has a long and ambiguous history, but in recent science it has taken on a formal and integral definition. | |||

The | ==The Main Idea== | ||

===A Mathematical Model=== | ===A Mathematical Model=== | ||

The fundamental | The fundamental relationship between Temperature <math>T</math>, Energy <math>E</math> and Entropy <math> S \equiv k_B \ln\Omega</math> is <math>\frac{dS}{dE}=\frac{1}{T} </math>. | ||

In order to understand and predict the behavior of solids, we can employ the Einstein Model. This simple model treats interatomic Coulombic force as a spring, justified by the often highly parabolic potential energy curve near the equilibrium in models of interatomic attraction (see Morse Potential, Buckingham Potential, and Lennard-Jones potential). In this way, one can imagine a cubic lattice of spring-interconnected atoms, with an imaginary cube centered around each one slicing each and every spring in half. | |||

A quantum mechanical harmonic oscillator has quantized energy states, with one quanta being a unit of energy <math>q=\hbar \omega_0 </math>. These quanta can be distributed among a solid by going into anyone of the three half-springs attached to each atom. In fact, the number of ways to distribute <math>q</math> quanta of energy between <math>N</math> oscillators is <math>\Omega = \frac{(q+N-1)!}{q!(N-1)!} </math>. From this, it is clear that entropy is intrinsically related to the number of ways to distribute energy in a system. | |||

From here, it is almost intuitive that systems should evolve to increased entropy over time. Following the fundamental postulate of thermodynamics: in the long-term steady state, all microstates have equal probability, we can see that a macrostate which includes more microstates is the most likely endpoint for a sufficiently time-evolved system. Having many microstates implies having many different ways to distribute energy, and thus high entropy. It should make sense that the Second Law of Thermodynamics states that "the entropy of an isolated system tends to increase through time". | |||

The treatment of a large number of particle is the study of Statistical Mechanics, whose applications to Physics are discussed in another page ([[Application of Statistics in Physics]]). It is apparent that Thermodynamics and Statistical Mechanics have become linked as the atomic behavior behind thermodynamic properties was discovered. | |||

===A Computational Model=== | |||

Entropy can be understood by watching the diffusion of gas in the following simulation: | |||

https://phet.colorado.edu/sims/html/gas-properties/latest/gas-properties_en.html | |||

This simulation connects two essential interpretations of Entropy. Firstly, notice that over time, although the gases begin separated, they end completely mixed. One could easily say that the disorder of the system increased over time, meaning the Entropy increased. Another way to look at it is from the perspective of statistics. There are more configurations where the particles can be disordered than ordered: there's only a couple ways all of the particles can be packed in to one corner, but uncountable ways they can be randomly dispersed. So left to its own, it is exceedingly likely to observe the particles reach a steady-state of perfect diffusion: where they are as mixed as possible. There's simply more ways for this to happen: it is the overwhelmingly likely outcome. | |||

==Examples== | |||

===Simple=== | |||

Question: Given that entropy is commonly applied to objects at everyday scales, explain why Boltzmann's definition of entropy is appropriate. | |||

<math> | Solution: A pencil has a mass <math>\mathcal{O}(1 \text{ gram})</math>. Pencils are made of wood and graphite, so let's say a pencil is 100% Carbon. Carbon's molar mass is <math>12 \frac{\text{gram}}{\text{mole}} </math>. So there's <math>\frac{1}{12}</math> mole of Carbon atoms in a pencil. Thats <math>\frac{6 \times 10^{23}}{12}</math> Carbon atoms. For each atom, there are three oscillating springs under the Einstein Model, so there is <math>\mathcal{O}(10^{23})</math> oscillators, <math>N</math>. Obviously, <math>\Omega</math> will quickly become unmanageably large. Taking <math>\ln{10^{23}}</math> we get only <math>52</math>. To give appropriate units, we need a constant to multiply, which <math> k_B </math> gives. It should make sense why working with entropy on everyday scales is manageable under this definition. | ||

===Middling=== | |||

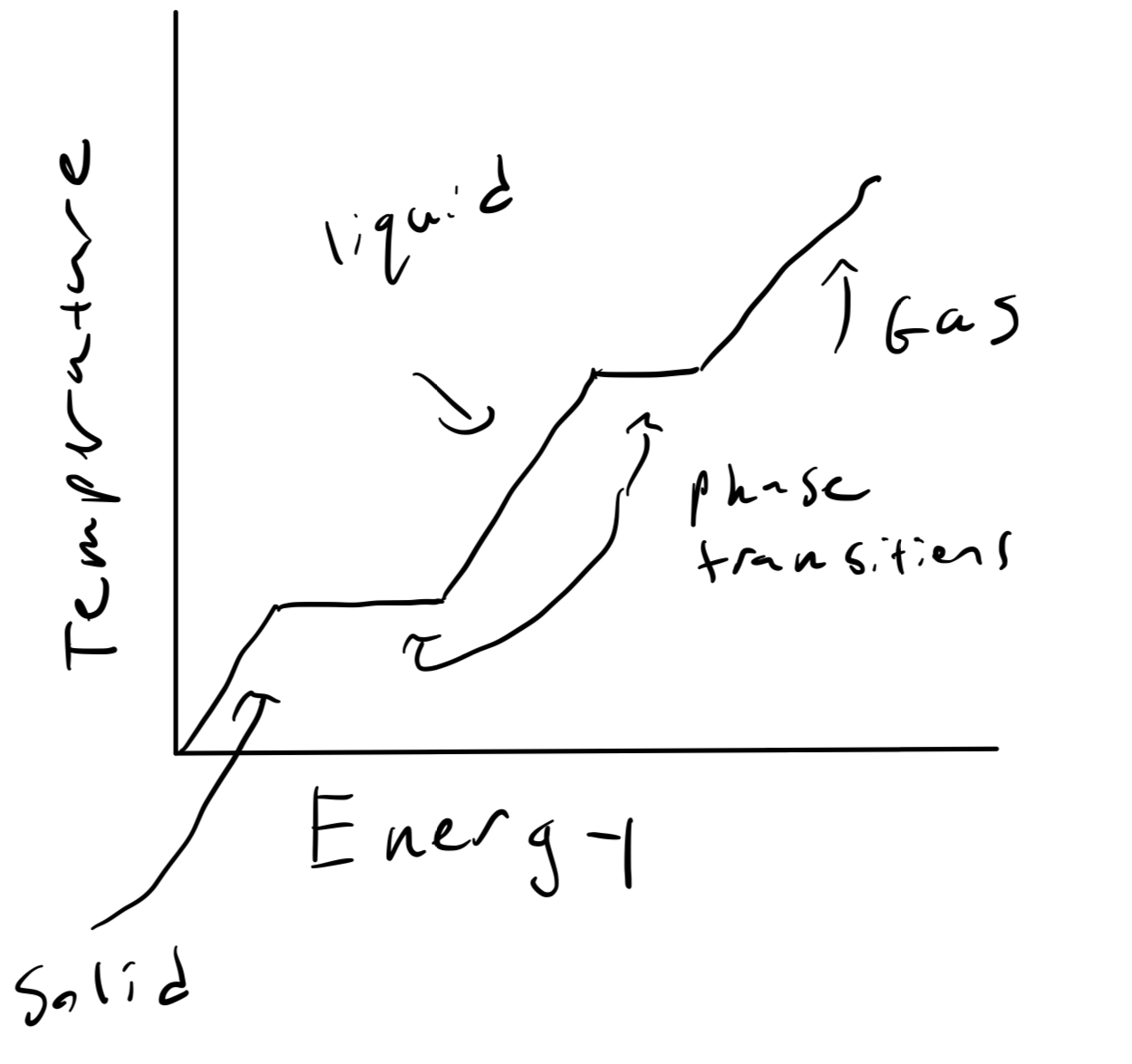

Question: During a phase transition, a material's temperature does not increase: for example, a solid melting into a liquid or a liquid freezing into a solid. Explain how this is consistent with the equations of entropy. | |||

[[File:Phases.jpeg]] | |||

<math>\ | Solution: Entropy satisfies <math>\frac{dS}{dE} = \frac{1}{T} </math>. When a solid melts into a liquid, there is obviously energy flowing into it, and all things held constant the liquid has more entropy than the solid. So as energy is being dumped in during the transition, entropy is increasing, so <math>\frac{dS}{dE} > 0 </math>. But notice that this does not mean the temperature is increasing. <math>T</math> can be a constant while entropy changes with respect to energy. So this is consistent. | ||

=== | ===Difficult=== | ||

Question: Show that there are <math>\Omega</math> ways to distribute <math>q</math> indistinguishable quanta between <math>N</math> oscillators where <math>\Omega = \frac{(N+q-1)!}{q!(N-1)!} </math> | |||

Solution: | |||

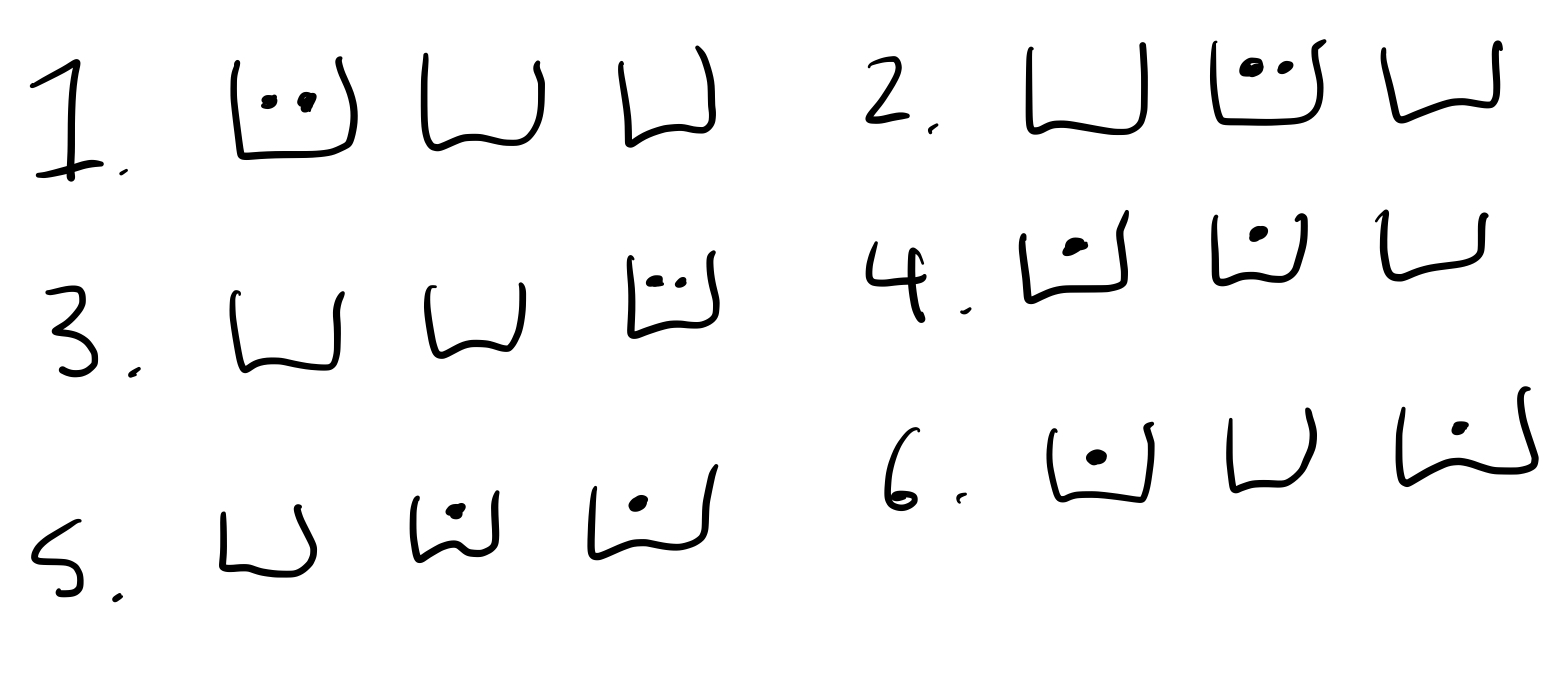

For illustration, let us first pick the <math>N=3</math> and <math>q=2</math> case and imagine the oscillators as buckets and the quanta as dots. The way we can distribute the quanta into the oscillators is shown below schematically | |||

[[File:Quanta_buckes.jpeg]] | |||

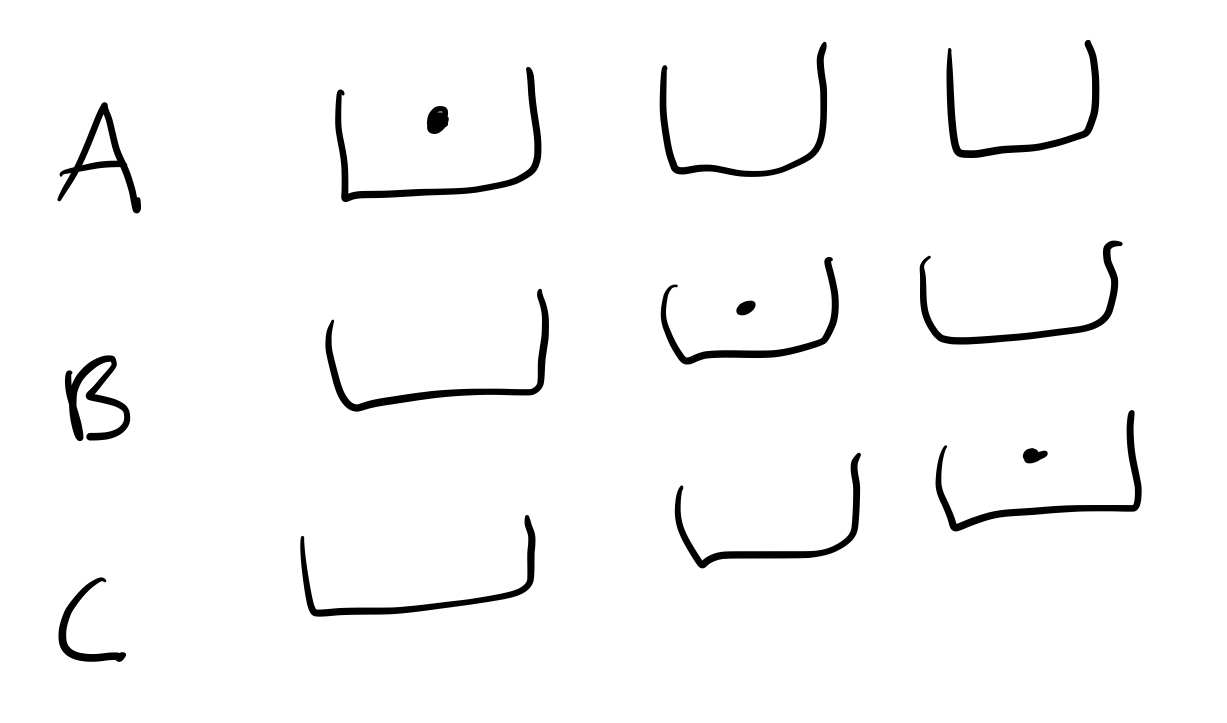

Instead of drawing it out, we can try and create a code that describes each configuration (triple bucket + dot set-up). Let's first imagine creating a "basis", something like this: | |||

[[File:Basis.jpeg]] | |||

With this basis, we could say that the above case 4 can be written as AB. Case 5 is BC and case 6 is AC. Keep in mind that since quanta are indistinguishable, AC and CA are equivalent and so are every other reordering. To designate two dots in one bucket, we can introduce a third letter, D. A D means to add another ball into the bucket in the combination with it, so case 1 is AD. All possible cases are AD, BD, CD, AB, BC, AC. Notice that these are all of the combinations of two letters from the set A,B,C,D. | |||

' | Now let's take the more general case. If we have <math>N</math> buckets, we need at least <math>N</math> basis buckets. But, each bucket can have <math>q-1</math> extra dots inside, in addition to the single basis bucket. So, to represent all combinations, we need <math>N+q-1</math> "letters". In this scheme, we might say that each additional letter after the basis means to add an extra dot to the letter alphabetically the same with respect to its set to keep things unique. So if the basis is A,B,C then D means add an extra to A, and E would mean add an extra to C. | ||

<math> | The amount of ways to distribute the dots is now <math>N+q-1 \choose q </math>, since we can pick sets of <math>q</math> letters from the <math>N+q-1</math> possibilities. | ||

<math>{N+q-1 \choose q} = \frac{(N+q-1)!}{q!(N-1)!}=\Omega</math> | |||

===MATLAB Code=== | |||

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%% | |||

% You may change one of these 2 variables, keep in mind once q is over 100 | |||

% It may be hard to compute | |||

q = 10; % Quanta of energy | |||

N = 3; % Oscillators | |||

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%% | |||

= | f = @(q,N)((factorial(q + (N - 1)))/(factorial(q) * factorial(N-1))); | ||

y = []; | |||

for i = 1:q | |||

y1 = f(i,N); | |||

y = [y y1]; | |||

end | |||

x = [1:q]; | |||

plot(x,y); | |||

xlim([1 q]); | |||

ylim([0, y(end)]); | |||

xlabel('q (Quanta of Energy)'); | |||

'' | ylabel('\Omega (Multiplicity)'); | ||

title('Multiplicity vs. Energy Quanta'); | |||

box on | |||

grid on; | |||

==Connectedness== | |||

#How is this topic connected to something that you are interested in? | |||

' | I am very interested in astrophysics, and there are very interesting cosmological analyses of entropy. For example, why do planets and stars form if they're more ordered than the interstellar medium they come from? Is the entropy of the universe really increasing? These topics have implications far beyond classical scales. | ||

#How is it connected to your major? | |||

I'm a Physics major. | |||

#Is there an interesting industrial application? | |||

'' | I'm a Physics major. But, I'll still answer: of course! You might say entropy is the opposing force of efficiency. In history, it was discovered in the context of heat loss with work by Carnot. If we could create infinite-machines, the world would have considerably less problems. | ||

Entropy is highly related to the concept of [[Quantized energy levels]]. The idea that energy can only be distributed in quanta between atoms is essential to understanding the idea of how many ways you can distribute energy in a system. If energy was continuous inside of atoms, there would be infinite ways to distribute energy into any system with more than one "oscillator" in the Einstein Model. | |||

Entropy | |||

==History== | ==History== | ||

The concepts of | The concepts of Temperature, Entropy and Energy have been linked throughout history. Historical notions of Heat described it as a particle, such as [[Sir Isaac Newton]] even believing it to have mass. In 1803, Lazare Carnot (father of [[Nicolas Leonard Sadi Carnot]]) formalized the idea that energy cannot be perfectly channeled: disorder is an intrinsic property of energy transformation. In the mid 1800s, [[Rudolf Clausius]] mathematically described a "transformation-content" of energy loss during any thermodynamic process. From the Greek word τροπή pronounced as "tropey" meaning "change", the prefix of "en"ergy was added onto the term when in 1865 entropy as we call it today was introduced. Clausius himself said <blockquote>I prefer going to the ancient languages for the names of important scientific quantities, so that they maymean the same thing in all living tongues. I propose, therefore, to call S the entropy of a body, after the Greek word "transformation". I have designedly coined the word entropy to be similar to energy, for these two quantities are so analogous in their physical significance, that an analogy of denominations seems to me helpful.</blockquote> Two decades later, Ludwig Boltzmann established the connection between entropy and the number of states of a system, introducing the equation we use today and the famous Boltzmann Constant: the first major idea introduced to the modern field of statistical thermodynamics. [1] | ||

In the | |||

<blockquote | |||

</blockquote> | |||

==Notable Scientists== | ==Notable Scientists== | ||

*[[Josiah Willard Gibbs]] | |||

*[[James Prescott Joule]] | |||

*[[Nicolas Leonard Sadi Carnot]] | |||

*[[Rudolf Clausius]] | |||

*[[Ludwig Boltzmann]] | |||

== See also == | |||

==See also== | |||

All thermodynamics is linked to the concept of | All thermodynamics is linked to the concept of Energy. See also Heat, Temperature, Statistical Mechanics, Spontaneity. For Fun, see the [https://en.wikipedia.org/wiki/Heat_death_of_the_universe Heat Death of the Universe] | ||

===Further reading=== | ===Further reading=== | ||

* [[Application of Statistics in Physics]] | *[[Application of Statistics in Physics]] | ||

* [[Quantized energy levels]] | *[[Quantized energy levels]] | ||

* [[Electronic Energy Levels and Photons]] | *[[Electronic Energy Levels and Photons]] | ||

===External links=== | ===External links=== | ||

* [https://en.wikipedia.org/wiki/ | *[https://en.wikipedia.org/wiki/Energy Energy] | ||

* [https://en.wikipedia.org/wiki/ | *[https://en.wikipedia.org/wiki/Thermodynamic_equilibrium Thermodynamics] | ||

* [https://en.wikipedia.org/wiki/ | *[https://en.wikipedia.org/wiki/Conservation_of_energy Conservation of Energy] | ||

* [https:// | *[https://fs.blog/entropy/ Entropy] | ||

==References== | ==References== | ||

1. “Entropy.” Wikipedia, Wikimedia Foundation, 23 Apr. 2022, https://en.wikipedia.org/wiki/Entropy | |||

[[Category:Statistical Physics]] | [[Category:Statistical Physics]] | ||

Latest revision as of 19:49, 26 April 2026

Claimed by Josh Brandt (April 19th, Spring 2022)

The increase of ... entropy is what distinguishes the past from the future, giving a direction to time.

— Stephen Hawking

Entropy is a fundamental characteristic of a system, closely related to the topic of Energy. It is often described as a quantitative measure of disorder; formally, it measures how many ways there are to distribute energy within a system. What makes Entropy so important is its connection to the Second Law of Thermodynamics, which states:

The entropy of an isolated system never decreases spontaneously.

Entropy is closely tied to statistics (see Application of Statistics in Physics), since it arises from probability. The Second Law of Thermodynamics rests on the fact that entropy is always more likely to increase than to decrease: an outcome is more likely when there are more ways for it to occur. On macroscopic scales, the probability of a decrease in entropy is non-zero, but so small that such a decrease is never observed.

The concept of Entropy is famous for ruling out Perpetual Motion Machines, since no machine can maintain its order indefinitely. The concept has a long and ambiguous history, but in modern science it has taken on a formal definition that is central to thermodynamics.

The Main Idea

A Mathematical Model

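The fundamental relationship between Temperature [math]\displaystyle{ T }[/math], Energy [math]\displaystyle{ E }[/math] and Entropy [math]\displaystyle{ S \equiv k_B \ln\Omega }[/math], where [math]\displaystyle{ \Omega }[/math] is the number of microstates available to the system, is [math]\displaystyle{ \frac{dS}{dE}=\frac{1}{T} }[/math] (with volume and particle number held fixed).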
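As a rough numerical check of [math]\displaystyle{ \frac{dS}{dE}=\frac{1}{T} }[/math], the sketch below computes [math]\displaystyle{ S = k_B \ln\Omega }[/math] for an Einstein solid (using the multiplicity formula given further down this section) and estimates [math]\displaystyle{ T }[/math] from finite differences of energy and entropy. The quantum size `quantum`, the oscillator count `N` and the range of `q` are hypothetical values chosen only for illustration.

```matlab
% Sketch: estimate T from 1/T = dS/dE for a small Einstein solid.
% All numerical values below are illustrative assumptions.
kB      = 1.380649e-23;   % Boltzmann constant, J/K
quantum = 1.0e-21;        % hypothetical energy of one quantum (hbar*omega_0), J
N       = 300;            % number of oscillators
q       = 0:600;          % number of energy quanta

% ln(Omega) with Omega = (q+N-1)!/(q!(N-1)!), computed with gammaln
% (gammaln(n+1) = ln(n!)) so that large factorials never overflow.
lnOmega = gammaln(q + N) - gammaln(q + 1) - gammaln(N);
S = kB * lnOmega;         % entropy, J/K
E = quantum * q;          % internal energy, J

% Central differences give dE/dS = T at the interior points q = 1..599.
T = (E(3:end) - E(1:end-2)) ./ (S(3:end) - S(1:end-2));

% Print (q, T) pairs for a few sample points.
fprintf('q = %3d  ->  T = %6.1f K\n', [q([2 101 301 600]); T([1 100 300 599])]);
```

Because [math]\displaystyle{ S }[/math] grows ever more slowly as energy is added, the estimated temperature rises with [math]\displaystyle{ E }[/math], as expected for a solid.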

To understand and predict the behavior of solids, we can use the Einstein Model. This simple model treats the interatomic Coulombic force as a spring, which is justified because the potential energy curve in models of interatomic attraction is approximately parabolic near equilibrium (see Morse Potential, Buckingham Potential, and Lennard-Jones potential). One can then imagine a cubic lattice of atoms connected by springs, with an imaginary cube centered on each atom slicing every spring in half.
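To see why a spring is a reasonable stand-in for the interatomic force, we can compare the Lennard-Jones potential with its parabolic (spring) approximation near equilibrium. This is a minimal sketch in reduced units, assuming [math]\displaystyle{ \epsilon = \sigma = 1 }[/math]; the effective spring constant [math]\displaystyle{ k_s = U''(r_0) = 72\epsilon/r_0^2 }[/math] follows from differentiating the potential twice at [math]\displaystyle{ r_0 = 2^{1/6}\sigma }[/math].

```matlab
% Sketch: the Lennard-Jones potential is nearly parabolic near equilibrium.
% Reduced units (epsilon = sigma = 1) are an assumption for illustration.
epsLJ = 1;  sigma = 1;
U = @(r) 4*epsLJ*((sigma./r).^12 - (sigma./r).^6);

r0 = 2^(1/6) * sigma;            % equilibrium separation, where dU/dr = 0
ks = 72 * epsLJ / r0^2;          % effective spring constant, U''(r0)

for frac = [0.1 0.01 0.001]      % fractional stretch of the bond
    r = r0 * (1 + frac);
    exact    = U(r) - U(r0);              % true energy cost of the stretch
    harmonic = 0.5 * ks * (r - r0)^2;     % spring (parabolic) approximation
    fprintf('stretch %.3f: exact %.3e, spring %.3e, rel. error %.2f%%\n', ...
            frac, exact, harmonic, 100*abs(harmonic - exact)/exact);
end
```

The relative error shrinks roughly in proportion to the stretch, so for the small vibrations of atoms in a solid the spring picture is a good approximation.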

A quantum mechanical harmonic oscillator has quantized energy states, with one quantum of energy equal to [math]\displaystyle{ \hbar \omega_0 }[/math]. These quanta can be distributed throughout a solid by going into any of the three oscillators associated with each atom (one for each direction x, y and z). The number of ways to distribute [math]\displaystyle{ q }[/math] quanta of energy among [math]\displaystyle{ N }[/math] oscillators is [math]\displaystyle{ \Omega = \frac{(q+N-1)!}{q!(N-1)!} }[/math]. Since [math]\displaystyle{ S \equiv k_B \ln\Omega }[/math], entropy is intrinsically related to the number of ways to distribute energy in a system.
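The multiplicity formula can be evaluated directly for a tiny solid. This sketch assumes [math]\displaystyle{ N = 3 }[/math] oscillators (one atom) and a handful of quanta, and uses the identity [math]\displaystyle{ \frac{(q+N-1)!}{q!(N-1)!} = {q+N-1 \choose N-1} }[/math] so that MATLAB's `nchoosek` does the counting.

```matlab
% Sketch: multiplicity and entropy of a tiny Einstein solid.
% N = 3 oscillators (a single atom) is an illustrative choice.
kB = 1.380649e-23;                 % Boltzmann constant, J/K
N  = 3;                            % number of oscillators
q  = 0:5;                          % number of energy quanta

% Omega = (q+N-1)!/(q!(N-1)!) = nchoosek(q+N-1, N-1)
Omega = arrayfun(@(qq) nchoosek(qq + N - 1, N - 1), q);
S = kB * log(Omega);               % S = kB ln(Omega), J/K

disp([q; Omega]);                  % row 1: q, row 2: Omega
```

With no quanta there is exactly one way to arrange the energy, so [math]\displaystyle{ S = 0 }[/math]; every added quantum increases [math]\displaystyle{ \Omega }[/math] (1, 3, 6, 10, 15, 21) and therefore [math]\displaystyle{ S }[/math].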

From here, it is almost intuitive that systems should evolve toward higher entropy over time. The fundamental postulate of statistical mechanics states that, for an isolated system in equilibrium, all accessible microstates are equally probable. A macrostate that includes more microstates is therefore the most likely endpoint for a system that has evolved for a sufficiently long time. Having many microstates means having many different ways to distribute energy, and thus high entropy. It should make sense, then, that the Second Law of Thermodynamics states that "the entropy of an isolated system tends to increase through time".
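The equal-probability argument can be made concrete with two Einstein solids sharing a fixed amount of energy. This is a sketch under assumed, hypothetical sizes (300 and 200 oscillators, 100 quanta): each way of splitting the quanta is a macrostate, its probability is proportional to the product of the two multiplicities, and the most probable split comes out at [math]\displaystyle{ q_A = 60 }[/math], which gives each oscillator the same average share of energy.

```matlab
% Sketch: two Einstein solids A and B exchanging energy (isolated together).
% Sizes and total energy are hypothetical, chosen small enough to list.
N_A = 300;  N_B = 200;          % oscillators in each solid
qTotal = 100;                   % total quanta shared between them

qA = 0:qTotal;                  % every possible macrostate (split of energy)
qB = qTotal - qA;

% ln(Omega) for each solid, using gammaln(n+1) = ln(n!)
lnOmegaA = gammaln(qA + N_A) - gammaln(qA + 1) - gammaln(N_A);
lnOmegaB = gammaln(qB + N_B) - gammaln(qB + 1) - gammaln(N_B);

% Microstates of the combined system multiply, so their logs add.
% With all microstates equally likely, P(macrostate) is proportional to Omega.
lnOmegaTot = lnOmegaA + lnOmegaB;
P = exp(lnOmegaTot - max(lnOmegaTot));   % subtract max to avoid overflow
P = P / sum(P);

[~, iPeak] = max(P);
qA_peak = qA(iPeak);
fprintf('Most probable split: qA = %d, qB = %d (P = %.3f)\n', ...
        qA_peak, qTotal - qA_peak, P(iPeak));
fprintf('P(qA = 0, all energy in B) = %.3g\n', P(1));
```

Raising all sizes by the same factor makes the peak sharper, which is why macroscopic systems are essentially always found at the most probable macrostate.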

The treatment of large numbers of particles is the subject of Statistical Mechanics, whose applications to Physics are discussed on another page (Application of Statistics in Physics). Thermodynamics and Statistical Mechanics became linked as the atomic behavior behind thermodynamic properties was discovered.

A Computational Model

Entropy can be understood by watching the diffusion of gas in the following simulation:

https://phet.colorado.edu/sims/html/gas-properties/latest/gas-properties_en.html

This simulation connects two essential interpretations of Entropy. First, notice that although the gases begin separated, over time they become completely mixed. One could say that the disorder of the system increased over time, meaning its Entropy increased. Another way to look at it is from the perspective of statistics. There are far more disordered configurations of the particles than ordered ones: there are very few ways for all of the particles to be packed into one corner, but an enormous number of ways for them to be randomly dispersed. Left to itself, the system is therefore overwhelmingly likely to reach a steady state of complete mixing, where the gases are as mixed as possible, simply because there are more ways for this to happen.
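The counting argument can be checked with a simplified two-box version of the simulation. This sketch assumes a hypothetical 100 particles, each independently equally likely to be in the left or right half of the container (rather than a corner), so the number of microstates with [math]\displaystyle{ n }[/math] particles on the left is [math]\displaystyle{ {100 \choose n} }[/math].

```matlab
% Sketch: why a gas spreads out, counted with a two-box model.
% Simplifying assumption: each of Np particles is independently equally
% likely to be in the left or right half of the container.
Np = 100;                                   % hypothetical particle count
n  = 0:Np;                                  % particles in the left half

% Number of microstates with n particles on the left: nchoosek(Np, n),
% computed in log form with gammaln to avoid overflow for large Np.
lnW = gammaln(Np + 1) - gammaln(n + 1) - gammaln(Np - n + 1);
P   = exp(lnW - Np * log(2));               % probability of each macrostate

fprintf('P(all %d particles on the left) = %.3g\n', Np, P(end));
fprintf('P(40 <= n <= 60)               = %.4f\n', sum(P(n >= 40 & n <= 60)));
```

Even with only 100 particles, the fully "unmixed" macrostate has probability [math]\displaystyle{ 2^{-100} }[/math], while nearly even splits account for more than 95% of all microstates.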

Examples

Simple

Question: Given that entropy is commonly applied to objects at everyday scales, explain why Boltzmann's definition of entropy is appropriate.

Solution: A pencil has a mass of order [math]\displaystyle{ \mathcal{O}(1 \text{ gram}) }[/math]. Pencils are made of wood and graphite, so as a rough approximation let's say a pencil is 100% carbon. Carbon's molar mass is [math]\displaystyle{ 12 \frac{\text{gram}}{\text{mole}} }[/math], so there is about [math]\displaystyle{ \frac{1}{12} }[/math] mole of carbon atoms in a pencil. That's [math]\displaystyle{ \frac{6 \times 10^{23}}{12} = 5 \times 10^{22} }[/math] carbon atoms. Each atom contributes three oscillators under the Einstein Model, so there are [math]\displaystyle{ N = \mathcal{O}(10^{23}) }[/math] oscillators. With that many oscillators, [math]\displaystyle{ \Omega }[/math] becomes unmanageably large: when the number of quanta is comparable to [math]\displaystyle{ N }[/math], [math]\displaystyle{ \ln\Omega }[/math] is itself of order [math]\displaystyle{ N }[/math], so [math]\displaystyle{ \Omega }[/math] is roughly [math]\displaystyle{ e^{10^{23}} }[/math]. The logarithm tames numbers like this (for comparison, [math]\displaystyle{ \ln{10^{23}} \approx 53 }[/math]), and multiplying by the tiny constant [math]\displaystyle{ k_B \approx 1.38 \times 10^{-23} \text{ J/K} }[/math] both supplies the units and brings [math]\displaystyle{ k_B \ln\Omega }[/math] down to a value of order 1 J/K. This is why working with entropy on everyday scales is manageable under Boltzmann's definition.
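The pencil estimate can be carried through numerically. This is a sketch, not a measurement: besides the example's 1 g of pure carbon and three oscillators per atom, it assumes (hypothetically) one quantum per oscillator on average, [math]\displaystyle{ q = N }[/math]. [math]\displaystyle{ \Omega }[/math] itself cannot be stored in any floating-point type, but `gammaln` gives its logarithm directly.

```matlab
% Sketch: order-of-magnitude entropy of a 1 g "pencil" of pure carbon.
% Assumption (not from the article): on average one quantum per oscillator, q = N.
kB    = 1.380649e-23;        % Boltzmann constant, J/K
NAv   = 6.022e23;            % Avogadro's number, 1/mol
mass  = 1;                   % g, order-of-magnitude pencil mass
molar = 12;                  % g/mol, molar mass of carbon

N = 3 * (mass / molar) * NAv;     % three oscillators per atom, about 1.5e23
q = N;                            % assumed number of quanta

% Omega itself is far too large for any floating-point type, but its
% logarithm is not: ln(Omega) = ln((q+N-1)!) - ln(q!) - ln((N-1)!)
lnOmega = gammaln(q + N) - gammaln(q + 1) - gammaln(N);
S = kB * lnOmega;                 % entropy, J/K

fprintf('N         = %.3g oscillators\n', N);
fprintf('ln(Omega) = %.3g\n', lnOmega);
fprintf('S         = %.3g J/K\n', S);
```

Under these assumptions [math]\displaystyle{ \ln\Omega }[/math] comes out near [math]\displaystyle{ 2 \times 10^{23} }[/math] and [math]\displaystyle{ S }[/math] near 3 J/K, an everyday-sized number.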

Middling

Question: During a phase transition, a material's temperature stays constant: for example, while a solid melts into a liquid or a liquid freezes into a solid. Explain how this is consistent with the equations of entropy.

[Figure: Phases.jpeg]

Solution: Entropy satisfies [math]\displaystyle{ \frac{dS}{dE} = \frac{1}{T} }[/math]. When a solid melts into a liquid, energy flows into it, and, all else held constant, the liquid has more entropy than the solid. So as energy is added during the transition, entropy increases and [math]\displaystyle{ \frac{dS}{dE} \gt 0 }[/math]. This does not require the temperature to increase: the equation only fixes the slope of [math]\displaystyle{ S }[/math] versus [math]\displaystyle{ E }[/math] to be [math]\displaystyle{ \frac{1}{T} }[/math]. During the transition [math]\displaystyle{ T }[/math] stays constant, so entropy simply grows in proportion to the energy absorbed, [math]\displaystyle{ \Delta S = \frac{\Delta E}{T} }[/math]. So a constant temperature is consistent with the equations of entropy.
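For a concrete number, we can apply [math]\displaystyle{ \Delta S = \frac{\Delta E}{T} }[/math] to melting ice. This sketch assumes the textbook latent heat of fusion of water (about 334 J/g), a hypothetical 10 g sample, and ignores the small volume change on melting; it steps through the energy input to show [math]\displaystyle{ S }[/math] rising while [math]\displaystyle{ T }[/math] stays fixed.

```matlab
% Sketch: entropy gained while ice melts at constant temperature.
% Assumptions: dS = dE/T with T fixed, volume change on melting ignored,
% and a textbook value for the latent heat of fusion of water.
L = 334;        % J/g, approximate latent heat of fusion of water
T = 273.15;     % K, melting point of ice at 1 atm
m = 10;         % g, hypothetical sample mass

Q = m * L;                 % energy absorbed during the transition, J
E = linspace(0, Q, 5);     % energy added so far, in equal steps, J
S = E / T;                 % entropy gained so far, J/K (slope 1/T throughout)

fprintf('E = %7.1f J   S = %6.3f J/K   T = %.2f K\n', [E; S; T * ones(size(E))]);
```

The temperature column never changes, while energy and entropy both grow steadily; the total entropy gained is about 12.2 J/K.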

Difficult

Question: Show that there are [math]\displaystyle{ \Omega }[/math] ways to distribute [math]\displaystyle{ q }[/math] indistinguishable quanta between [math]\displaystyle{ N }[/math] oscillators where [math]\displaystyle{ \Omega = \frac{(N+q-1)!}{q!(N-1)!} }[/math]

Solution: For illustration, let us first pick the [math]\displaystyle{ N=3 }[/math] and [math]\displaystyle{ q=2 }[/math] case, imagining the oscillators as buckets and the quanta as dots. The possible ways to distribute the quanta among the oscillators are shown schematically below.

[Figure: Quanta_buckes.jpeg, the six ways to place two quanta in three buckets]

Instead of drawing every case, we can create a code that describes each configuration (three buckets plus dots). Let's first define a "basis", something like this:

[Figure: Basis.jpeg, the basis A, B, C]

With this basis, we could say that case 4 above can be written as AB, case 5 as BC, and case 6 as AC. Keep in mind that since quanta are indistinguishable, AC and CA are equivalent, and so is every other reordering. To designate two dots in one bucket, we can introduce a fourth letter, D. A D means "add another dot to the bucket of the letter it is paired with", so case 1 is AD. All possible cases are AD, BD, CD, AB, BC, AC. Notice that these are exactly the combinations of two letters from the set A, B, C, D.

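Now let's take the more general case. With [math]\displaystyle{ N }[/math] buckets we need [math]\displaystyle{ N }[/math] basis letters, one per bucket. A configuration is described by [math]\displaystyle{ q }[/math] letters in total, and at least one of them must be a basis letter, so at most [math]\displaystyle{ q-1 }[/math] extra "repeat" letters (like D above) are ever needed. To represent all combinations we therefore need [math]\displaystyle{ N+q-1 }[/math] "letters". Keeping track of which bucket each repeat letter refers to becomes awkward for larger [math]\displaystyle{ q }[/math], so an equivalent and unambiguous picture helps: write each configuration as a row of [math]\displaystyle{ q }[/math] dots split into [math]\displaystyle{ N }[/math] buckets by [math]\displaystyle{ N-1 }[/math] dividers. For [math]\displaystyle{ N=3 }[/math] and [math]\displaystyle{ q=2 }[/math], case 1 (AD, two dots in the first bucket) is ••|| and case 4 (AB) is •|•|. Every configuration corresponds to exactly one arrangement of these [math]\displaystyle{ N+q-1 }[/math] symbols, fixed by which [math]\displaystyle{ q }[/math] of the positions hold dots.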

The number of ways to distribute the dots is therefore [math]\displaystyle{ {N+q-1 \choose q} }[/math], since we choose sets of [math]\displaystyle{ q }[/math] out of the [math]\displaystyle{ N+q-1 }[/math] possibilities.

[math]\displaystyle{ {N+q-1 \choose q} = \frac{(N+q-1)!}{q!(N-1)!}=\Omega }[/math]
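The result can be checked by brute force for small cases. This sketch (the `(N, q)` pairs are arbitrary illustrative choices) enumerates every occupation vector [math]\displaystyle{ (n_1, \dots, n_N) }[/math] with [math]\displaystyle{ 0 \le n_i \le q }[/math], keeps those whose quanta add up to [math]\displaystyle{ q }[/math], and compares the count with [math]\displaystyle{ {N+q-1 \choose q} }[/math].

```matlab
% Sketch: brute-force check of Omega = (N+q-1)!/(q!(N-1)!).
% Enumerates every occupation vector (n_1, ..., n_N) with 0 <= n_i <= q
% and counts those whose quanta add up to q.
cases = [3 2; 3 4; 4 3; 2 5];      % illustrative (N, q) pairs
for c = 1:size(cases, 1)
    N = cases(c, 1);  q = cases(c, 2);
    count = 0;
    for k = 0:(q + 1)^N - 1
        % the base-(q+1) digits of k form one candidate occupation vector
        n = mod(floor(k ./ (q + 1).^(0:N-1)), q + 1);
        if sum(n) == q
            count = count + 1;
        end
    end
    formula = nchoosek(N + q - 1, q);
    fprintf('N = %d, q = %d: brute force %d, formula %d\n', N, q, count, formula);
    assert(count == formula);
end
```

The first pair reproduces the six cases counted by hand above; enumeration grows as [math]\displaystyle{ (q+1)^N }[/math], so it is only practical for small systems, which is exactly why the closed-form formula matters.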

MATLAB Code

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%
% You may change either of these 2 variables. Omega is computed from
% log-gamma values, so large q no longer overflows the way factorial() does.
q = 10; % Quanta of energy
N = 3;  % Oscillators
%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

% Multiplicity Omega = (q+N-1)!/(q!(N-1)!), using gammaln(n+1) = ln(n!)
% and rounding back to the nearest integer
f = @(q,N) round(exp(gammaln(q + N) - gammaln(q + 1) - gammaln(N)));

x = 1:q;       % numbers of quanta to plot
y = f(x, N);   % vectorized: one multiplicity per value of q

plot(x, y);
xlim([1 q]);
ylim([0, y(end)]);
xlabel('q (Quanta of Energy)');
ylabel('\Omega (Multiplicity)');
title('Multiplicity vs. Energy Quanta');
box on;
grid on;

Connectedness

- How is this topic connected to something that you are interested in?

I am very interested in astrophysics, and there are very interesting cosmological analyses of entropy. For example, why do planets and stars form if they're more ordered than the interstellar medium they come from? Is the entropy of the universe really increasing? These topics have implications far beyond classical scales.

- How is it connected to your major?

I'm a Physics major.

- Is there an interesting industrial application?

I'm a Physics major, but I'll still answer: of course! You might say entropy is the opposing force of efficiency. Historically, the concept arose from Carnot's work on the heat lost when heat is converted into work. If we could build perpetual motion machines, the world would have far fewer problems.

Entropy is closely related to the concept of Quantized energy levels. The idea that energy can only be distributed between atoms in discrete quanta is essential to counting how many ways there are to distribute energy in a system. If energy were continuous inside atoms, there would be infinitely many ways to distribute energy in any system with more than one "oscillator" in the Einstein Model.
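The claim about continuous energy can be illustrated by holding the total energy fixed and shrinking the size of one quantum. This sketch uses arbitrary, hypothetical energy units and three oscillators; as the quantum shrinks, [math]\displaystyle{ q }[/math] grows and [math]\displaystyle{ \Omega }[/math] grows without bound, which is the continuous limit described above.

```matlab
% Sketch: why quantization keeps Omega finite.
% A fixed total energy is split into ever smaller quanta; the energy values
% below are arbitrary illustrative units, not physical data.
N       = 3;                         % oscillators
Etotal  = 1;                         % fixed total energy (arbitrary units)
quantum = [1 0.1 0.01 0.001];        % size of one quantum (same units)

q = round(Etotal ./ quantum);        % number of quanta: 1, 10, 100, 1000
Omega = arrayfun(@(qq) nchoosek(qq + N - 1, N - 1), q);

for k = 1:numel(q)
    fprintf('quantum = %-6g q = %-5d Omega = %d\n', quantum(k), q(k), Omega(k));
end
```

Each tenfold reduction in the quantum size multiplies [math]\displaystyle{ \Omega }[/math] by roughly 100 here (3, 66, 5151, 501501), so a truly continuous energy would leave [math]\displaystyle{ \Omega }[/math], and hence [math]\displaystyle{ S }[/math], undefined.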

History

The concepts of Temperature, Entropy and Energy have been linked throughout history. Early notions of Heat described it as a particle, and Sir Isaac Newton even believed it to have mass. In 1803, Lazare Carnot (father of Nicolas Leonard Sadi Carnot) formalized the idea that energy cannot be perfectly channeled: disorder is an intrinsic property of energy transformation. In the mid-1800s, Rudolf Clausius mathematically described a "transformation-content" of energy lost during any thermodynamic process. In 1865 he introduced the name entropy, formed from the Greek word τροπή (tropē, meaning "change" or "transformation") with the prefix "en-" borrowed from "energy". Clausius himself said

I prefer going to the ancient languages for the names of important scientific quantities, so that they may mean the same thing in all living tongues. I propose, therefore, to call S the entropy of a body, after the Greek word "transformation". I have designedly coined the word entropy to be similar to energy, for these two quantities are so analogous in their physical significance, that an analogy of denominations seems to me helpful.

In 1877, Ludwig Boltzmann established the connection between entropy and the number of microstates of a system, the basis of the equation [math]\displaystyle{ S = k_B \ln\Omega }[/math] we use today (the constant [math]\displaystyle{ k_B }[/math] was later named the Boltzmann Constant in his honor). This was a founding idea of the modern field of statistical thermodynamics. [1]

Notable Scientists

- Josiah Willard Gibbs

- James Prescott Joule

- Nicolas Leonard Sadi Carnot

- Rudolf Clausius

- Ludwig Boltzmann

See also

All of thermodynamics is linked to the concept of Energy. See also Heat, Temperature, Statistical Mechanics, and Spontaneity. For fun, see the Heat Death of the Universe (https://en.wikipedia.org/wiki/Heat_death_of_the_universe).

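Further reading

- Application of Statistics in Physics

- Quantized energy levels

- Electronic Energy Levels and Photons

External links

- Energy: https://en.wikipedia.org/wiki/Energy

- Thermodynamics: https://en.wikipedia.org/wiki/Thermodynamic_equilibrium

- Conservation of Energy: https://en.wikipedia.org/wiki/Conservation_of_energy

- Entropy: https://fs.blog/entropy/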

References

1. “Entropy.” Wikipedia, Wikimedia Foundation, 23 Apr. 2022, https://en.wikipedia.org/wiki/Entropy